Beyond Vibe Coding

The daily loop that turns AI-assisted development into AI-native operations

Everybody is talking about vibe coding - just describe what you want, let the AI build it, iterate by feel. And it works. For prototypes, for greenfield features, for the first version of something.

But vibe coding stops at the end of the chat session. The AI doesn’t remember what it built yesterday. It doesn’t know whether the thing it shipped last week actually worked. It can’t improve a process it has no memory of running.

I’ve been working with what I think comes next. In February I wrote about my AI-native development process - the operating loop, the planning docs, continuity as an engineering artifact. I described a daily report command as “the studio’s heartbeat.” That was one month ago. A lot has changed since.

What it’s become - across four of my products, refined every single day for a month - is a self-improving daily operating system run by an amnesiac operator. The agentic system runs the full diagnostic, produces the analysis, and then I ask it to improve the system it just operated within. Every morning. The process gets better every day. Not in theory. In commits.

I want to describe what this thing actually is now, because I think it might be more important than any of the products it operates on. Or maybe I’m just excited.

What a morning actually looks like

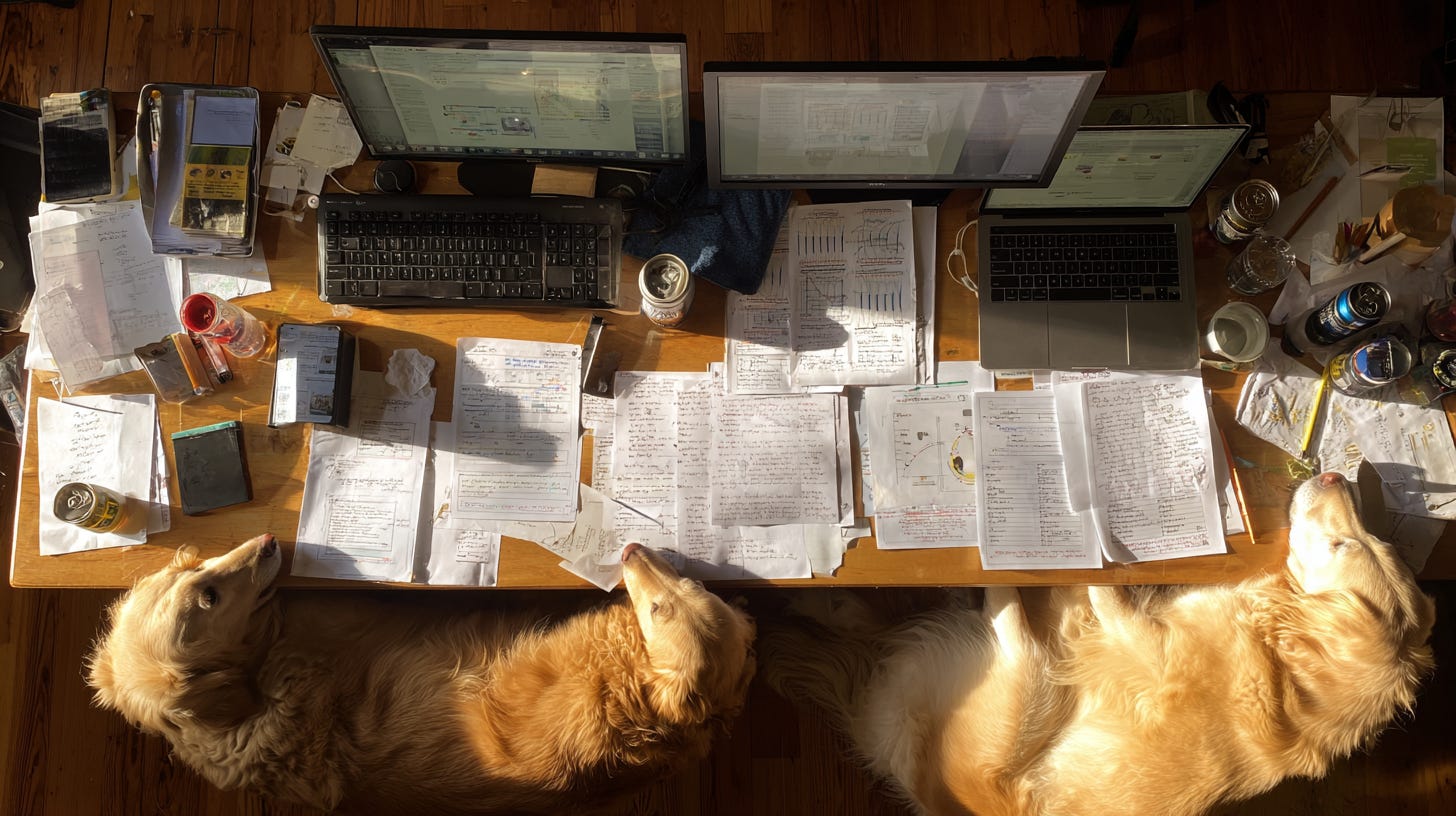

I type /daily and walk away to feed the dogs.

By the time I’m back, my team has done this: queried the production database across 8 parallel connections, pulled analytics from Amplitude, evaluated content quality across five dimensions, scored 18 pieces of generated content against a rubric, checked the outreach pipeline, compared everything to yesterday’s numbers and last week’s numbers, and assembled it all into a prioritized to-do list.

From scratch. Because it doesn’t remember yesterday.

That’s FrameDial. Seven parallel extractions fan out across Postgres, Bluesky, SendGrid, and Amplitude in 15 seconds. Scripts pre-score every story on five editorial dimensions, flag which ones need the agent’s judgment, and hand it a compact brief instead of raw data. One subagent produces the editorial review, social review, outreach report, and prioritized to-do. Wall time: 5-7 minutes. (It used to be three subagents and 10+ minutes. The retrospectives killed two of them.)

BloomEDU fires 27+ Amplitude queries in batches of 3, tracks every recommendation with a unique ID, carries forward evolving insights with daily annotations, and auto-resolves yesterday’s watch list items against current data overnight.

Agentic News runs 8 parallel database queries through 15+ renderers and produces six documents in a single atomic commit: usage report, content audit, pipeline review, outreach report, Slack update, and a consolidated to-do. The agent reads a 110-line analysis brief instead of the full 600-line report - every number pre-computed, every anomaly pre-flagged.

BuddhaUR queries MongoDB and fires 16 Amplitude API calls in two batches, auto-generates the full report with trend detection and anomaly flagging, and hands the agent a 40-line summary to review. That pipeline went from 45 minutes of manual queries to 3 minutes automated - in 18 working days of daily retrospectives.

Four products. Four /daily commands. Same underlying architecture. Each one custom-fitted to what that product needs to measure and decide. None of them finished. All of them better than they were last week.

In my February post I described the operating loop as:

name the problem

design the data

build continuity

ship thin slices

measure honestly

refactor aggressively

That’s still right. But the daily operating system is what makes “measure honestly” and “refactor aggressively” happen by default instead of by discipline. The system does the measuring. And then it helps me decide.

The pattern that keeps emerging

I didn’t design this architecture top-down. It emerged from doing the same thing every day and noticing what worked. But looking across all four products, the same three layers keep showing up.

Layer 1: Mechanical extraction.

Scripts pull data from databases, APIs, and prior reports. Everything lands in a workspace as pre-rendered tables and compact analysis briefs. This is deterministic - no LLM involved. Agentic News runs 8 parallel database queries and pipes them through 15+ renderers. BloomEDU fires 27+ Amplitude API calls in batches of 3. FrameDial fans out across seven parallel extractions and produces ~20 workspace files in 15 seconds. BuddhaUR hits MongoDB and Amplitude in parallel, runs trend detection and anomaly flagging on the raw numbers, and spits out a pre-analyzed report draft. The numbers differ but the pattern is identical: fan out, collect, pre-compute, land in files.
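A minimal sketch of that fan out, collect, pre-compute, land-in-files shape, to make the pattern concrete. It is not any product's actual script: the two extractors, their numbers, and the names `build_workspace` and `write_workspace` are hypothetical stand-ins for real database and API queries.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

# Hypothetical extractors. Each stands in for a real database or API query.
def extract_signups() -> dict:
    return {"today": 12, "yesterday": 9}

def extract_email() -> dict:
    return {"sent": 400, "opened": 212}

EXTRACTORS = {"signups": extract_signups, "email": extract_email}

def build_workspace() -> dict[str, str]:
    """Fan out, collect, pre-compute. Returns file name -> file content."""
    # Fan out: run every extractor on its own thread.
    with ThreadPoolExecutor(max_workers=len(EXTRACTORS)) as pool:
        futures = {name: pool.submit(fn) for name, fn in EXTRACTORS.items()}
        # Collect: result() blocks until that extractor has finished.
        data = {name: future.result() for name, future in futures.items()}

    # Pre-compute: derive the numbers here so the agent never does arithmetic.
    data["signups"]["delta"] = data["signups"]["today"] - data["signups"]["yesterday"]
    data["email"]["open_rate"] = round(data["email"]["opened"] / data["email"]["sent"], 3)

    return {f"{name}.json": json.dumps(payload, indent=2) for name, payload in data.items()}

def write_workspace(files: dict[str, str], workspace: Path) -> None:
    """Land in files: one small file per source."""
    workspace.mkdir(parents=True, exist_ok=True)
    for name, content in files.items():
        (workspace / name).write_text(content)
```

Keeping the build step separate from the write step is a choice made for this sketch. It lets the deterministic part be checked without touching disk.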

Why pre-render? I covered this in the February post as the “context rot” problem - long chats are where clarity goes to die. But it goes deeper than chat length. The full daily report for Agentic News is 700+ lines with 50+ data tables. No LLM context window can hold all the raw data and produce quality analysis simultaneously. So the scripts do the data wrangling and hand the agent a compact brief - maybe 80-100 lines - with the key numbers pre-extracted and the analysis tasks clearly defined.

In February I described this as “automate the mechanical.” That’s still the principle. But the implementation has gotten specific enough that it’s worth naming: the fill-in-place pattern. The draft has `<!-- WRITE id="executive-summary" -->` markers with specific instructions - what to look for, what data to reference, what the task is. The agent reads a compact brief and fills in structured analysis. Not “analyze this data” (which gets you generic observations) but “write 2-3 sentences interpreting the retention trend, looking specifically for whether build 248 moved the activation needle, referencing these three numbers.”
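One way the mechanics of fill-in-place could work, as a sketch only. It assumes each marker is an HTML comment of the form `<!-- WRITE id="..." -->` and that the agent returns its text keyed by slot id. The function names `pending_slots` and `fill_slots` are hypothetical.

```python
import re

# Assumed marker shape, based on the example in the text: <!-- WRITE id="..." -->
MARKER = re.compile(r'<!--\s*WRITE\s+id="([^"]+)"\s*-->')

def pending_slots(draft: str) -> list[str]:
    """List the slot ids that still need the agent's analysis."""
    return MARKER.findall(draft)

def fill_slots(draft: str, analysis: dict[str, str]) -> str:
    """Replace each marker that has analysis; leave the others in place."""
    def replace(match: re.Match) -> str:
        return analysis.get(match.group(1), match.group(0))
    return MARKER.sub(replace, draft)
```

Leaving unfilled markers untouched means a follow-up pass can call `pending_slots` on the result and see exactly what the agent skipped.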

Constraints make the LLM useful. Open-ended prompts make it verbose.

Layer 2: Constrained LLM analysis.

The agent reads the brief and writes structured analysis into predefined slots. Its job is interpretation and judgment - connecting dots across metrics, identifying what changed and why it matters, recommending what to do about it. One product uses the fill-in-place markers. Another uses structured JSON returns. A third auto-generates narrative sections with specific analysis functions: find the metric with the largest delta, check if it crossed a threshold, lead with it.
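The third variant (find the largest delta, check the threshold, lead with it) is simple enough to sketch. Everything here is an assumption made for illustration: the `Metric` fields, the per-metric `threshold`, and the choice to compare relative change rather than raw deltas so that metrics in different units can be ranked against each other.

```python
from dataclasses import dataclass

@dataclass
class Metric:
    name: str
    today: float
    yesterday: float
    threshold: float  # hypothetical alert level for this metric

def relative_change(m: Metric) -> float:
    """Change as a fraction of yesterday, so different units are comparable."""
    if m.yesterday == 0:
        return 0.0 if m.today == 0 else float("inf")
    return (m.today - m.yesterday) / abs(m.yesterday)

def crossed_threshold(m: Metric) -> bool:
    """True when yesterday and today sit on opposite sides of the threshold."""
    return (m.yesterday < m.threshold) != (m.today < m.threshold)

def pick_lead(metrics: list[Metric]) -> Metric:
    """The metric with the largest move in either direction."""
    return max(metrics, key=lambda m: abs(relative_change(m)))

def lead_sentence(metrics: list[Metric]) -> str:
    lead = pick_lead(metrics)
    sentence = f"{lead.name} moved {relative_change(lead):+.0%} ({lead.yesterday} -> {lead.today})"
    if crossed_threshold(lead):
        sentence += f", crossing its threshold of {lead.threshold}"
    return sentence + "."
```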

The key insight from running this daily for a month: the agent gets dramatically better when you tell it exactly what to look for. FrameDial’s editorial subagent scores stories on five specific dimensions (specificity, analytical depth, evidence grounding, frame distinctness, tone) plus two binary checks (does the emphasis reframe how you think? would someone share this?). That rubric didn’t exist on day one. It emerged from weeks of daily retrospectives where the agent and I noticed what “good” and “bad” actually meant in our specific context.
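A rubric like this also makes triage mechanical. The sketch below shows how pre-scored stories might be split into ones that need the agent's judgment and ones it can skim. The five dimension names come from the text. The 1-5 scale, the `floor` of 3.0, and the rule "flag if any single dimension is weak" are assumptions, and where the pre-scores come from is outside the sketch.

```python
# The five dimensions named above. The 1-5 scale and the floor are assumptions.
DIMENSIONS = (
    "specificity",
    "analytical_depth",
    "evidence_grounding",
    "frame_distinctness",
    "tone",
)

def needs_judgment(scores: dict[str, float], floor: float = 3.0) -> bool:
    """Flag a story when any single dimension falls below the floor."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    return any(scores[d] < floor for d in DIMENSIONS)

def triage(stories: dict[str, dict[str, float]]) -> dict[str, list[str]]:
    """Split story ids into those to deep-read and those to skim."""
    result: dict[str, list[str]] = {"deep_read": [], "skim": []}
    for story_id, scores in stories.items():
        bucket = "deep_read" if needs_judgment(scores) else "skim"
        result[bucket].append(story_id)
    return result
```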

Layer 3: Continuity infrastructure.

This is the part I underestimated in February. I talked about continuity as decision logs, gotchas lists, architecture snapshots. That’s the foundation - but it’s passive. What I’ve built since is active continuity: systems that carry forward not just context but commitments, decisions, and unresolved questions.

Each product has evolved its own version:

FrameDial uses an open issues tracker - a JSON file where each issue has a title, hypothesis, what’s been tried, instance history, and escalation rules. The extraction script auto-ticks “days open” every morning. When the threshold hits, priority auto-escalates from P2 to P1. But it goes beyond issues. Yesterday’s reviewer writes a structured handoff - what drove the grade, what to watch tomorrow, what self-corrected - and the extraction script weaves it into a briefing narrative the next agent reads before touching anything else. When a content quality dimension drops, the briefing searches the 7-day history and reports: “specificity was last at this level on March 24, bounced to 4.00 the next day.” The agent doesn’t need to wonder if it’s a trend. The data tells it.
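A rough sketch of the two mechanical pieces described here: the morning tick with auto-escalation, and the history lookup behind the "last at this level" line. The field names, the five-day escalation threshold, and the sample history are hypothetical. The real tracker is a JSON file whose schema is not shown in this post.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Issue:
    title: str
    hypothesis: str
    priority: str = "P2"
    days_open: int = 0
    escalate_after_days: int = 5  # hypothetical threshold

def tick(issues: list[Issue]) -> list[Issue]:
    """Run once per morning: age every issue, escalate P2 to P1 at the threshold."""
    for issue in issues:
        issue.days_open += 1
        if issue.priority == "P2" and issue.days_open >= issue.escalate_after_days:
            issue.priority = "P1"
    return issues

def last_time_this_low(history: list[tuple[str, float]], today: float) -> Optional[str]:
    """history holds oldest-first (date, score) pairs for one dimension, excluding today."""
    for i in range(len(history) - 1, -1, -1):
        day, score = history[i]
        if score <= today:
            if i + 1 < len(history):
                return f"last at this level on {day}, then {history[i + 1][1]:.2f} the next day"
            return f"last at this level on {day}"
    return None
```

Because the lookup returns a finished sentence, the briefing can drop it in verbatim and the agent starts from the comparison instead of computing it.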

BuddhaUR uses a central state file that carries a weekly narrative, known insights with review dates, active experiments with baselines and signal dates, and narrative overrides that suppress false alarms on expected dips. Action items open 5+ days are flagged STALE. Experiments past their signal date get auto-compared against baseline metrics and flagged READY TO EVALUATE. The recommendation engine cross-references completed actions so it doesn’t re-recommend things that already shipped. The whole continuity system went from nothing - no cross-session state beyond git history - to this, in 18 working days. Each day’s retrospective produced 3-6 concrete improvements that got implemented immediately.
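The STALE and READY TO EVALUATE flags are plain date arithmetic. This sketch assumes action items and experiments are dicts with the hypothetical keys shown, and reads "past their signal date" as strictly after it. The actual state file layout is not given here.

```python
from datetime import date

def flag_action_items(items: list[dict], today: date, stale_after_days: int = 5) -> list[dict]:
    """Mark unfinished action items STALE once they have been open 5+ days."""
    for item in items:
        age = (today - item["opened"]).days
        is_stale = not item["done"] and age >= stale_after_days
        item["flag"] = "STALE" if is_stale else None
    return items

def flag_experiments(experiments: list[dict], metrics: dict[str, float], today: date) -> list[dict]:
    """Compare experiments past their signal date against their baseline."""
    for exp in experiments:
        if today > exp["signal_date"]:
            current = metrics[exp["metric"]]
            exp["change_vs_baseline"] = round(current - exp["baseline"], 4)
            exp["flag"] = "READY TO EVALUATE"
        else:
            exp["flag"] = None
    return experiments
```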

BloomEDU uses a strategic context file that tracks five dimensions of continuity: active experiments with hard evaluation deadlines, open recommendation context explaining why each item is still open, evolving insights that accumulate daily observations and update their hypotheses as interventions land, recent decisions with rationale, and qualitative notes from outreach replies. Every recommendation gets a unique R-ID and a status: OPEN, IN PROGRESS, DONE, DEFERRED, or REJECTED. A sync script matches R-IDs across reports so nothing drifts. Watch list items auto-resolve against current data - if yesterday the agent said “watch D7 retention in the March 22 cohort,” and today’s data shows it held, the system resolves it before the agent even reads the report. The cycle closes completely: data identifies signal → agent writes recommendation → gets tracked with an ID → next day’s report shows what’s open and what was decided → agent acts accordingly.
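A sketch of the two checks that close that cycle: catching recommendations that silently dropped out of today's report, and resolving watch items against current data. The five statuses come from the text. The `R-` plus digits ID format, the dict keys, and the "held at or above a floor" rule are assumptions made for illustration.

```python
import re
from enum import Enum

class Status(Enum):
    OPEN = "OPEN"
    IN_PROGRESS = "IN PROGRESS"
    DONE = "DONE"
    DEFERRED = "DEFERRED"
    REJECTED = "REJECTED"

# Assumed ID shape: "R-" followed by digits. The real format is not given above.
R_ID = re.compile(r"\bR-\d+\b")

def drifted(tracked: dict[str, Status], todays_report: str) -> list[str]:
    """Unresolved R-IDs from the tracker that today's report never mentions."""
    mentioned = set(R_ID.findall(todays_report))
    unresolved = {Status.OPEN, Status.IN_PROGRESS}
    return sorted(
        rid for rid, status in tracked.items()
        if status in unresolved and rid not in mentioned
    )

def resolve_watch_items(watch_list: list[dict], current: dict[str, float]) -> list[dict]:
    """Resolve a watch item when current data shows the metric held at or above its floor."""
    for item in watch_list:
        value = current.get(item["metric"])
        if value is not None and value >= item["floor"]:
            item["resolved"] = True
            item["note"] = f"{item['metric']} held at {value}"
        else:
            item["resolved"] = False
    return watch_list
```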

The principle across all of them: you’re not storing memories. You’re storing decisions, commitments, and unresolved questions in a format a fresh agent can act on without any prior conversation. The agent doesn’t need to remember. It needs to read.

The four prompts that make it compound

Everything above is the operating system. But an operating system that doesn’t improve is just a runbook. What makes this compound is four prompts I run after every daily cycle. Same prompts, every day, across all four products.

Prompt 1: Process retrospective. After the full pipeline finishes, I ask the agent:

Great work. Before we continue - from your experience working through that process today top to bottom, is there anything you’d recommend we improve? New scripts, revised templates, removed steps, new files, consolidated processes? Split your recommendations into two buckets: quick wins we should implement right now (< 5 minutes each), and structural improvements to track for a focused session. Remember what it was like to come to this task with no context - what would have made your cold start faster or your analysis sharper?

The “no context” line matters. The agent just experienced the cold-start problem firsthand. It knows which files were confusing, which steps felt redundant, which data was missing when it needed it. And the two-bucket split prevents a common failure mode I hit early on - implementing ten recommendations when three of them were quick fixes and seven required real architecture work. Now the quick wins get shipped immediately and the structural changes get tracked without blocking the day.

This prompt is responsible for most of the architectural improvements in the system. The fill-in-place pattern came from a process retrospective. So did consolidating three subagents into one. So did monitoring counters. So did pre-scored scaffolds that let the agent skip deep-reading 60% of stories. Each of those changes made the next day faster and better. Then the next retrospective found the next improvement. Compounding.

Prompt 2: Continuity audit.

Now read the prior 3 days of reports and tracking files. I want you to look for specific gaps: decisions that were made but aren’t reflected in our tracking files. Recommendations that appeared more than once without resolution. Metrics that moved significantly without investigation. Context from previous days that would have changed your analysis today if you’d had it sooner. What would you add, change, or restructure in our continuity files so that tomorrow’s agent starts sharper than you did?

Early versions were vague - “is there anything you wish you knew?” - and produced generic observations. Telling it exactly what to look for produces specific structural improvements. The open issues tracker with auto-escalation was born from this prompt. So was the recommendation lifecycle. So was the “yesterday’s unchecked items” extraction that carries forward unfinished work.

Prompt 3: Strategic prioritization.

Based on everything in today’s reports, our open issues, and our goals - what are the most impactful things we could do today? For each one: what specifically would we learn or ship, what does it unblock, and what’s the cost of waiting another day? Organize them as Now (do today), Next (this week), and Later (track but don’t start yet).

This transitions from review to execution. The key instruction: “what’s the cost of waiting another day?” It forces the agent to think about sequencing, not just ranking. Some days there’s one Now item and eight Laters. Some days there are six Nows. The structure makes the tradeoffs visible so I can push back on the right things.

Prompt 4: Documentation hygiene.

Before we close out, run through this checklist: (1) Do our tracking files - open issues, recommendations, action items - reflect every decision and outcome from today? (2) Does our project documentation match the current state of the code after today’s changes? (3) Are there any to-do items we’re carrying forward that should have completion criteria or deadlines added? (4) Is there anything we did today that tomorrow’s agent won’t know about unless we write it down right now?

Early versions said “do a once-over on our documentation” - too open-ended, inconsistent results. The checklist addresses four real failure modes: untracked decisions, stale docs, vague carry-forwards, and invisible work.

This closes the loop. Tomorrow’s fresh agent inherits whatever state we leave behind. This prompt ensures it’s clean.

Four prompts. Maybe 20 minutes total. And they’re the reason the system compounds instead of just repeating.

Sometimes the daily loop surfaces questions the data can’t answer - what does a good retention intervention look like for this category of app, or why have content quality scores plateaued. That’s when I reach for structured deep research: a brief with specific context, named comparable products, concrete questions, and real constraints (solo developer, React Native, 200 users - so the research doesn’t recommend things I can’t do). The key discipline is specificity. “How do successful apps handle daily engagement streaks?” produces useful findings. “What are best practices for daily engagement?” produces platitudes. The findings feed back into the loop - research informs a decision, the decision becomes a tracked action item, tomorrow’s report measures whether it worked. The system absorbs external knowledge the same way it absorbs internal data.

What this changes about how I work

In February I wrote: “I currently keep 6-10 projects in active motion every day inside a portfolio of 20+.” I described planning docs and continuity systems as the way I keep context switching cheap. That’s true - but the daily operating system is why I can run multiple serious products simultaneously without any of them drifting.

When I ship a change to the content pipeline, I know tomorrow morning whether it worked - not through gut feel, but through scored quality dimensions compared against yesterday’s scores. When I run an outreach campaign, I see the full funnel from email sent through trial signup through conversion, broken down by segment and A/B variant, compared week over week. When a metric moves, I know about it within 24 hours.

And the path isn’t always linear. Some days the retrospective produces a hypothesis worth testing, not a change worth shipping. FrameDial currently runs A/B tests on editorial scoring weights - does a higher specificity threshold produce stories people actually share more? BuddhaUR tests outreach variants against conversion by segment. The daily loop doesn’t just measure before and after. It measures A against B, with the same infrastructure, and the continuity system tracks which variant is running so tomorrow’s agent knows what it’s looking at. Not every improvement is an iteration. Some are experiments - and the system has to carry both branches until the data picks a winner.

I’m not making decisions in the dark. And I’m not spending my time pulling data and formatting reports. The agent does that. I spend my time on judgment: is this the right metric? Is this recommendation addressing the root cause? Should we ship this or wait for more signal?

In February I drew the automation boundary: “automate the mechanical, keep judgment human.” The daily operating system is the fullest expression of that principle I’ve found. The agent handles everything on one side of the line. I handle everything on the other. And the four prompts ensure the line itself keeps moving - automating more of the mechanical work each day so more of my time goes to judgment.

But I want to be honest about what’s still hard. The system is fragile in ways that aren’t obvious. A schema change can break three renderers. An API rate limit change cascades through data extraction. The meta-loop helps catch these, but it doesn’t prevent them.

And there’s a subtler problem. When you have this much data flowing through a daily review, you can start optimizing for the metrics instead of the thing the metrics measure. The system is good at telling you what happened. It’s less good at telling you whether you should care.

That’s still my job.

The open question

I keep going back and forth on what this is. Is there a product here - a framework that other founders could use? Or is this just what competent AI-native operations will look like in two years, and everyone will do some version of it?

The pattern is definitely generalizable. Three layers - mechanical extraction, constrained LLM analysis, continuity infrastructure. Four daily prompts that make the system self-improving. A structured way to bring in external research when internal data isn’t enough. Slash commands, workspace patterns, tracking file specifications. All of that could be packaged.

But the power is that it’s custom-fitted. FrameDial’s editorial scoring rubric doesn’t transfer to a meditation app. An outreach pipeline for a wildflower learning app looks nothing like one for an AI news service. The general pattern transfers. The implementation doesn’t. And the cadence depends on your signal volume. Daily works for me because four products generate enough data overnight to make each morning’s analysis meaningful. A pre-launch product with 20 beta users might get more signal from a weekly deep-dive than a daily skim. The rhythm should match the data, not the calendar.

Maybe the product is the pattern itself - a guide that says: here are the layers, here are the prompts, here’s how to think about continuity infrastructure, now build your own version. Less template, more architecture.

Or maybe it’s simpler than that. Maybe the insight is just: if you work with an AI agent every day, ask it to improve the process you’re working through together. Not occasionally. Not when something breaks. Every single day. The agent experienced the cold-start problem this morning. It knows what was missing. It knows what was redundant. It knows what would make tomorrow better.

You just have to ask.

The products

I should be honest: none of these are finished. They’re good - three of the four have paying subscribers who keep renewing, which means the core value is landing. But they’re B+ on a good day, still finding their legs.

Some real numbers. BuddhaUR holds 40% of users at 30 days. Agentic News and FrameDial Daily both run 50%+ open rates. Agentic gets 6%+ click-through. FrameDial clicks are lower - I’m starting to suspect the daily email is the product, not a funnel to the site. The daily loop will sort it out.

FrameDial - AI news that re-frames every story through competing analytical lenses instead of editorial opinion

Agentic News - Personalized AI news agents that learn what matters to you and filter the rest

BuddhaUR - A Buddhist teaching companion that adapts to your tradition and experience level

BloomEDU - A nature learning app for identifying wildflowers and building botanical knowledge

See the rest at Skylark Creations. If you try one and something's rough around the edges - yeah, I know. Check back in a week.